Recently

“Libraries Need More Freedom to Distribute Digital Books,” The Atlantic. A court case is likely to shape how we read books on smartphones, tablets, and computers in the future, and it doesn’t look good for libraries. March 30, 2023.

“Humane Ingenuity 51: Apple’s Vision + The Cost of Forever” — Revisiting the original design documents for the Macintosh computer to understand why we’re in a love/hate relationship with Apple, and a comparison of how much it costs to save a book and a web page forever. February 27, 2024.

Of Note

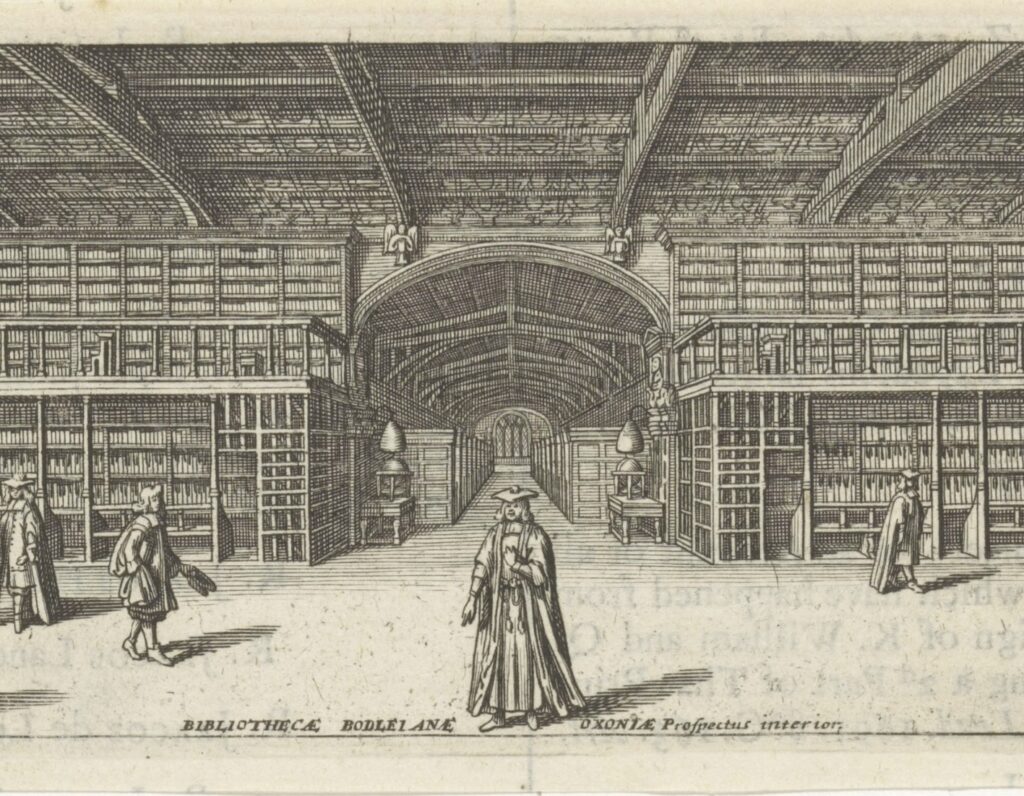

“The Books of College Libraries Are Turning Into Wallpaper,” The Atlantic. University libraries around the world are seeing precipitous declines in the use of the books on their shelves. Why is this happening and what does it mean for the future of libraries? May 26, 2019

“The Narrow Passage of Gortahig” — On the rugged western coast of Ireland, a critical road is a bit too narrow, and the locals like it that way. August 26, 2018

Subscribe to my newsletter

Humane Ingenuity looks in depth at technology that helps rather than hurts human understanding, and human understanding that helps us create better technology. New issues are sent to subscribers about once a month.