As we await the next generation of engineered writing, of tools like ChatGPT that are based on large language models (LLMs), it is worth pondering whether they will ever create truly great and unique prose, rather than the plausible-sounding mimicry they are currently known for.

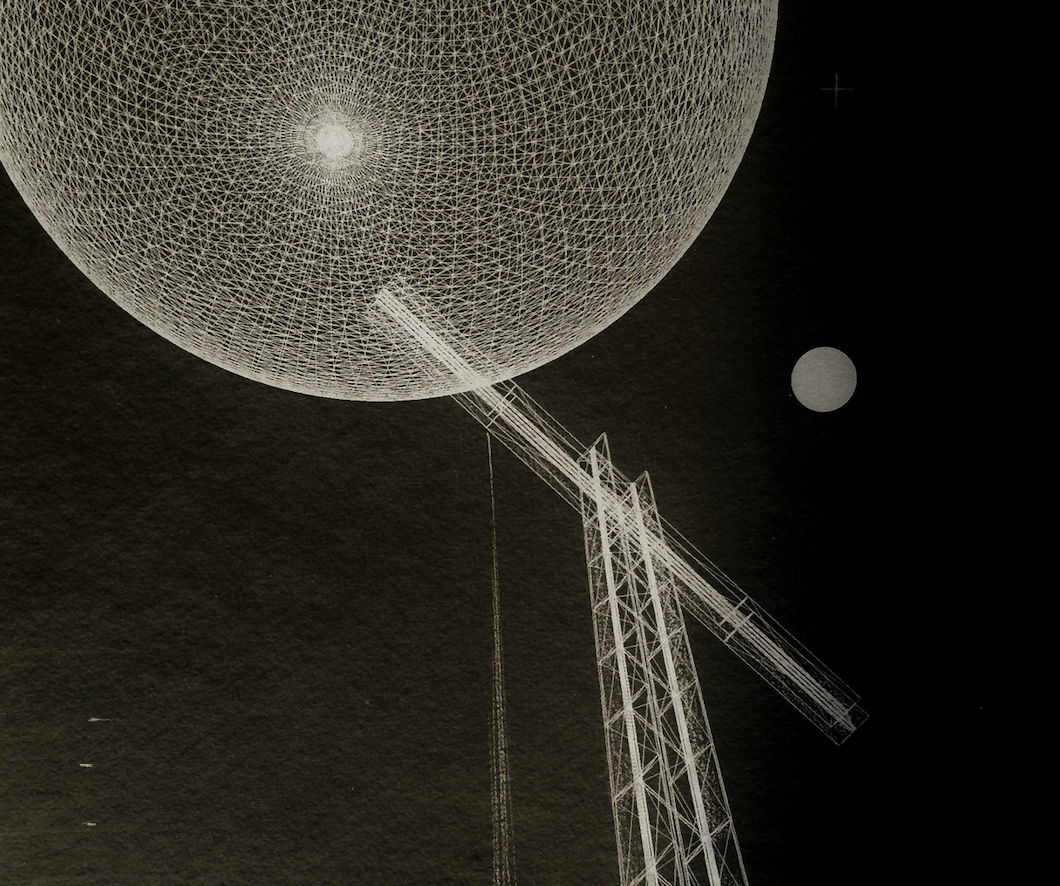

By preprocessing countless words and the statistical relationships between them from millions of texts, an LLM creates a multidimensional topology, a complex array of hills and valleys. Into this landscape a human prompt sets in motion a narrative snowball, which rolls according to the model’s internal physics, gathering words along the way. The aggregated mass of words is what appears sequentially on the screen.

This is an impressive feat. But it has several major problems if you are concerned about writing well. First, a simple LLM has the same issue a pool table has: the ball will always follow the same path across the surface, in a predictable route, given its initial direction, thrust, and spin. Without additional interventions, an LLM will select the most probable word to follow the text so far, based on its predetermined internal calculus. This is, of course, a recipe for unvaried familiarity, as the angle of the human prompt, like the pool cue, can overdetermine the flow that ensues.
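The pool-table behavior can be made concrete with a toy sketch. Everything here is an illustrative assumption: the tiny `NEXT_WORD_SCORES` table and the `greedy_continue` helper are invented for this example, and a real LLM conditions on the whole preceding text rather than on a single prior word. The point is only that always taking the top-scoring option yields the same path every time.

```python
# Hypothetical toy "model": for each word, made-up scores for what comes next.
# A real LLM scores tens of thousands of tokens using the entire context.
NEXT_WORD_SCORES = {
    "the": {"cat": 0.5, "dog": 0.3, "snowball": 0.2},
    "cat": {"sat": 0.6, "ran": 0.4},
    "dog": {"ran": 0.7, "sat": 0.3},
    "snowball": {"rolled": 0.9, "sat": 0.1},
    "sat": {"down": 1.0},
    "ran": {"away": 1.0},
    "rolled": {"away": 1.0},
}


def greedy_continue(prompt_word, max_words=5):
    """Extend the prompt by always choosing the highest-scoring next word."""
    words = [prompt_word]
    while len(words) < max_words and words[-1] in NEXT_WORD_SCORES:
        options = NEXT_WORD_SCORES[words[-1]]
        # No randomness anywhere: the same prompt always rolls the same way.
        words.append(max(options, key=options.get))
    return " ".join(words)


if __name__ == "__main__":
    print(greedy_continue("the"))
    print(greedy_continue("the"))  # identical to the first line
```

In this sketch "snowball" can never follow "the," however many times the prompt is run, because "cat" always scores higher.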

To counteract this criticism and achieve some level of variation while maintaining comprehensibility, ChatGPT and other LLM-based tools turn up the “temperature,” an internal variable. At 0, the model shows perfect fidelity to the physics, i.e., it always selects the most likely next word. At something more like 0.8, the gravitational pull in its textspace is slightly weakened, so that less common words will be chosen more frequently. This, in turn, bends the overall path of words in new directions. The intentional warping of the topological surface via the temperature dial enables LLMs to spit out different texts based on the same prompt, effectively giving the snowball constant tugs in more random directions than the perfect slalom course determined by the iron laws of physics. Turn the temperature up further and even wilder things can happen.
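For readers who want to see the dial itself, here is a minimal sketch of one common way temperature is applied: the model's raw scores are divided by the temperature before being turned into probabilities with a softmax. The function names, the three candidate words, and their scores are assumptions made up for illustration, not the internals of ChatGPT or any particular tool.

```python
import math
import random


def temperature_probs(scores, temperature):
    """Turn raw scores into probabilities, flattened or sharpened by temperature."""
    if temperature <= 0:
        # Treat 0 as "always pick the top-scoring word."
        best = scores.index(max(scores))
        return [1.0 if i == best else 0.0 for i in range(len(scores))]
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_word(words, scores, temperature, rng):
    """Draw one word at random according to the temperature-adjusted odds."""
    probs = temperature_probs(scores, temperature)
    return rng.choices(words, weights=probs, k=1)[0]


if __name__ == "__main__":
    words = ["cat", "dog", "snowball"]  # hypothetical candidates
    scores = [2.0, 1.0, 0.1]            # hypothetical raw scores
    rng = random.Random(0)
    for t in (0, 0.8, 2.0):
        probs = [round(p, 3) for p in temperature_probs(scores, t)]
        picks = [sample_word(words, scores, t, rng) for _ in range(8)]
        print(t, probs, picks)
```

As the temperature rises, the top word's share of the probability shrinks and the rarer words get picked more often, which is the weakened gravitational pull described above.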

Yet writing well isn’t about using less frequent words or having more frequent tangents. Great writing forges alternative pathways with intentionality. Styles and directions are not shifted randomly, but as needed to strengthen one’s case or to jolt the reader after a span of more mundane prose. For instance, my writing style for this newsletter, although less serious and less formal than my academic writing style, nevertheless is prone to use the phrase “for instance” and the word “nevertheless.” My sentences tend to be longer than those you might encounter in more casual writing, and I generally avoid starting a sentence with “Anyway,” or ending a sentence with an exclamation point. But sometimes, to underscore my argument, I do use an exclamation point!

Anyway, dialing up the temperature creates variability, leading to different responses to the same prompt, which is an improvement. But this hack is only on the output side of the LLM; by the time the snowball is rolling around, those hills and valleys are already firmly sculpted by the preprocessing of a distinct slate of texts. In other words, the input of the LLM has already been determined. With many of the LLM-based tools we are encountering today, those corpora are incredibly large and omnivorous. ChatGPT is an indiscriminate generalist in what it has read, because it wants to be able to write on virtually any topic.

Here again, however, there is an obvious issue. Good writing isn’t just the selection and ordering of words, the output; good writing is the product of good reading. Writers aren’t indiscriminate generalists, but tend to be rather choosy and personal about what they read. As humans they also have a fairly limited reading capacity, which means that their styles are highly influenced by idiosyncratic reading histories, by their whim. Good readers can often discern which writers a writer has read, as little stylistic quirks pop up here and there — a recognizable artisanal blend, mixed with some individually developed ingredients. It is hard to see how great writing can come from a model that is a generalist, or from a prompt asking for “a story in the style of” just one writer, or even from an LLM trained on a discerning, highbrow corpus, although each of those might have interesting, skillful outputs.

If we want our LLMs to be truly variable and creative, we would have to train the models not on a mass of texts or even the texts of a set of “good writers” (if we could even agree on who those are!), but on a limited, odd array of texts one human being has ingested over their lifetime, which they think about in relationship to their experience of life itself, and which they process and transform over time. And this begins to sound a lot like a story in the style of Jorge Luis Borges, in which a machine seeks to become a writer to impress human beings, and so it asks someone to assemble a library of great works, and the machine waits patiently for years while its human assistant, engrossed by what they are reading, piles up books next to a comfortable chair.

Subscribe to the Humane Ingenuity newsletter:

(Alice Baber,


(Roger Brown,


(Recliners + the scrolling text of a book on the ceiling, yes please.)
